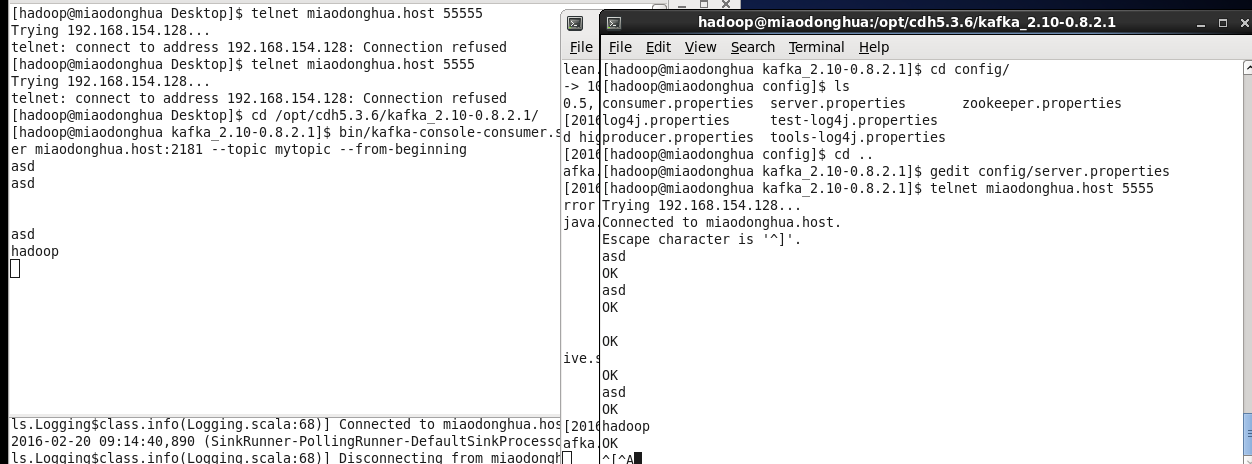

Integrating the two is needed to stream the data in a Kafka topic at high speed to different sinks. Here Flume acts as the consumer and stores the data in HDFS.

3. Start the Kafka server: `bin/kafka-server-start.sh config/server.properties`

4. Create the topic in Kafka: `bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic kafkatest`

5. Run the producer for the Kafka topic: `bin/kafka-console-producer.sh --broker-list localhost:9092 --topic kafkatest`

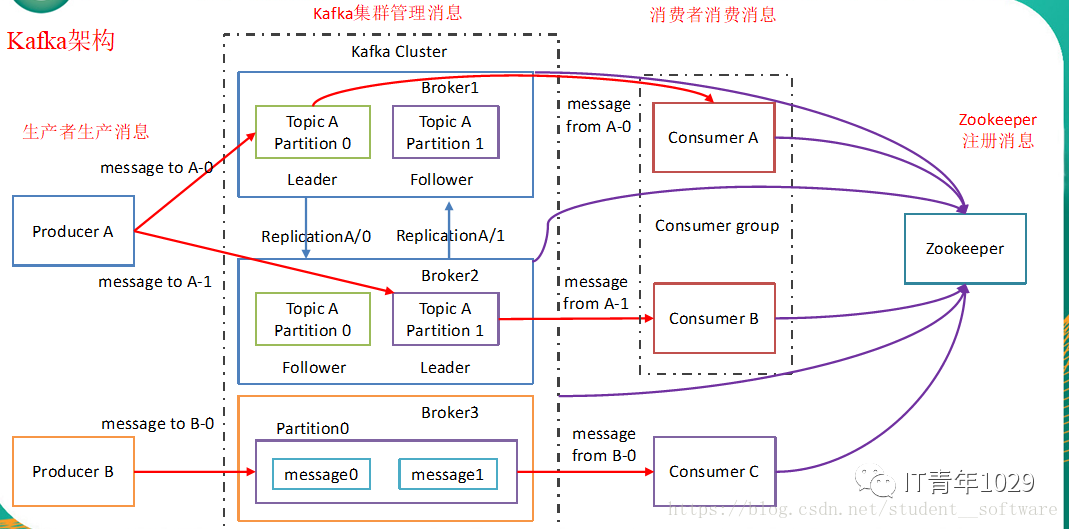

Flume is integrated with Kafka to stream a high volume of log data from source to destination, with the data stored in HDFS.
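The Flume side of this pipeline is driven by an agent configuration file. The following is a minimal sketch, not a definitive setup: it assumes Flume 1.7 or later (older releases use different Kafka source property names), and the agent name `agent1`, the component names, the file name `kafka-to-hdfs.conf`, and the HDFS path are hypothetical values chosen for illustration. Only the broker address `localhost:9092` and the topic `kafkatest` come from the commands in this post.

```shell
#!/bin/sh
# Write a hypothetical Flume agent config: Kafka source -> memory channel -> HDFS sink.
cat > kafka-to-hdfs.conf <<'EOF'
# Name the components of the agent (all names are illustrative)
agent1.sources = kafka-source
agent1.channels = mem-channel
agent1.sinks = hdfs-sink

# Kafka source: Flume consumes from the kafkatest topic
agent1.sources.kafka-source.type = org.apache.flume.source.kafka.KafkaSource
agent1.sources.kafka-source.kafka.bootstrap.servers = localhost:9092
agent1.sources.kafka-source.kafka.topics = kafkatest
agent1.sources.kafka-source.kafka.consumer.group.id = flume
agent1.sources.kafka-source.channels = mem-channel

# In-memory channel buffering events between source and sink
agent1.channels.mem-channel.type = memory
agent1.channels.mem-channel.capacity = 10000
agent1.channels.mem-channel.transactionCapacity = 1000

# HDFS sink: the path below is an assumption, adjust it to your NameNode
agent1.sinks.hdfs-sink.type = hdfs
agent1.sinks.hdfs-sink.hdfs.path = hdfs://localhost:9000/flume/kafkatest
agent1.sinks.hdfs-sink.hdfs.fileType = DataStream
agent1.sinks.hdfs-sink.hdfs.writeFormat = Text
agent1.sinks.hdfs-sink.channel = mem-channel
EOF

# To start the agent, run this from the Flume home directory (requires a Flume install):
# bin/flume-ng agent --conf conf --conf-file kafka-to-hdfs.conf --name agent1
```

With the agent running, lines typed into the console producer should end up as files under the configured HDFS path, which can be inspected with `hdfs dfs -ls`.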
